New Books for You

Book Recommendations

Popular Children's Book Series

Popular Young Adult Books

Popular Romance Novels

Popular Crime Novels

From Our Magazine

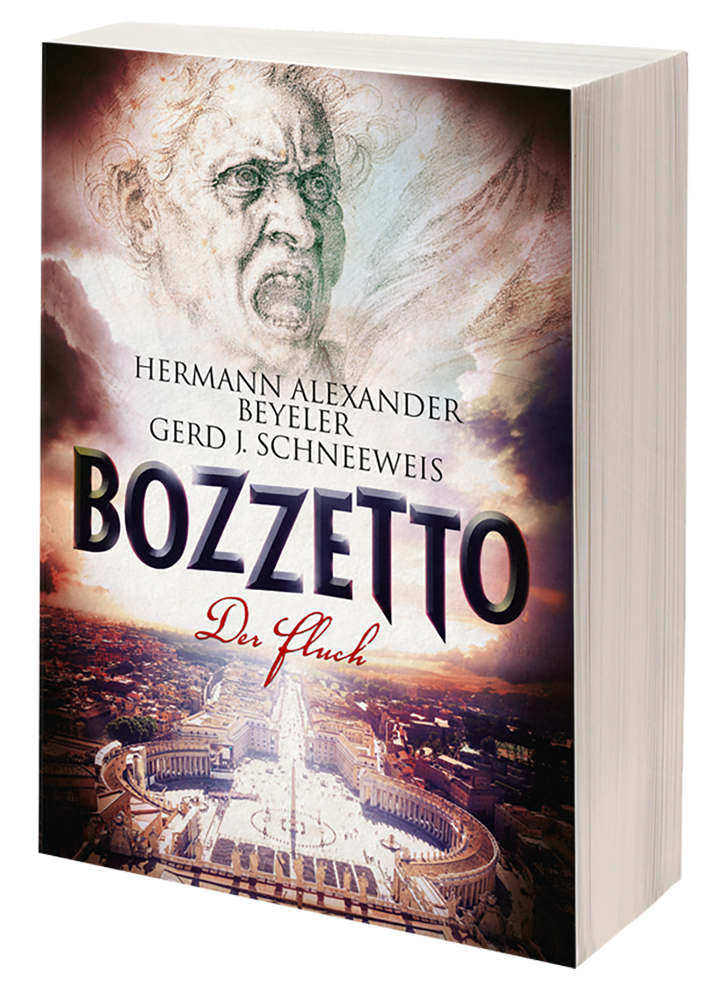

Review: "Bozzetto" by Gerd J. Schneeweis and Hermann Alexander Beyeler

The book "Bozzetto" by Gerd J. Schneeweis and Hermann Alexander Beyeler is about a draft for Michelangelo's painting "The Last Judgment", which he initially, on a small wooden panel

Getting Started in E-Commerce with the Right Specialist Reading

These days it is no longer all that complicated to launch your own online shop. There are, for example, website builders, simple shop systems, and the like. Whether it

Welcome to the Bücherstube! Here you can buy books online

Reading more books has many benefits. First and foremost, it helps you learn new things: by reading across a wide range of topics, you gain knowledge and broaden your horizons. Books can also improve your writing, because they show you how to structure your thoughts and arguments clearly and concisely. And finally, reading is a good way to relax and escape the stress of everyday life. The catalogue of ragnor.de has been integrated here.

Here you will find the absolute bestsellers, from romance novels to classic crime fiction. Take your time, browse around, and get inspired!